After a mishap with some Steam drivers forced me to reinstall Pop-OS, I decided to make my box a bit more resilient. I noticed that my Pop-OS install didn't have a recovery partition. This is a very nice feature to have: you don't need to go hunting for a USB stick if your root filesystem ever gets corrupted.

Normally you get the Recovery partition out of the box with Pop-OS. But I had done a custom install, and you don't get a Recovery partition unless you specifically add it. So I had to jump through a few hoops listed in this article here. But that article needed a decent example of two things:

- running the Pop-OS upgrade tool (`pop-upgrade`)

- mapping the correct UUIDs in the `recovery.conf` and boot loader entry

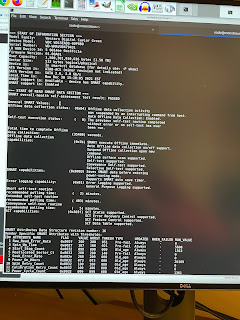

Here are the mappings, color-coded to help identify what goes where:
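If you want to double-check the mappings by script rather than by eye, here is a minimal Python sketch. It assumes `recovery.conf` is a flat `KEY=VALUE` file and that the UUID entries use keys ending in `_UUID` (names such as `EFI_UUID`, `RECOVERY_UUID` and `ROOT_UUID` are my assumption, so check them against your own file). The sample values and the partition table below are made up for illustration; on a real system you would take the partition UUIDs from `lsblk -f` or `blkid`.

```python
def parse_conf(text):
    """Parse flat KEY=VALUE lines, skipping blank lines and comments."""
    entries = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        entries[key.strip()] = value.strip()
    return entries


def check_uuids(conf, known_uuids):
    """Map every non-empty *_UUID key to the partition that owns that UUID.

    Returns {key: partition name}, with None where no partition matches.
    """
    by_uuid = {uuid.lower(): name for name, uuid in known_uuids.items()}
    result = {}
    for key, value in conf.items():
        if key.endswith("_UUID") and value:
            result[key] = by_uuid.get(value.lower())
    return result


# Hypothetical sample data, NOT taken from a real install.
SAMPLE_CONF = """\
HOSTNAME=pop-os
EFI_UUID=AAAA-1111
RECOVERY_UUID=BBBB-2222
ROOT_UUID=0f0f0f0f-1111-2222-3333-444444444444
LUKS_UUID=
"""

# Hypothetical partition UUIDs, as you might copy them from `lsblk -f`.
SAMPLE_PARTITIONS = {
    "efi": "AAAA-1111",
    "recovery": "BBBB-2222",
    "root": "deadbeef-1111-2222-3333-444444444444",
}

if __name__ == "__main__":
    report = check_uuids(parse_conf(SAMPLE_CONF), SAMPLE_PARTITIONS)
    for key, partition in report.items():
        print(f"{key}: {partition or 'NO MATCHING PARTITION'}")
```

In the sample data the root UUID is deliberately wrong, so the script flags `ROOT_UUID` as having no matching partition. That is the kind of mismatch you want to catch before you need the recovery partition.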

Here is the journal output from the upgrade tool (`pop-upgrade`):

Dec 25 12:03:52 pop-os com.system76.PopUpgrade.Notify.desktop[3621]: checking if pop-upgrade requires an update

Dec 25 12:03:52 pop-os pop-upgrade[3762]: [INFO ] daemon/mod.rs:389: initializing daemon

Dec 25 12:03:52 pop-os pop-upgrade[3762]: [INFO ] daemon/mod.rs:749: daemon registered -- listening for new events

Dec 25 12:03:52 pop-os pop-upgrade[3794]: pop-upgrade was already not on hold.

Dec 25 12:03:52 pop-os pop-upgrade[3762]: [INFO ] daemon/mod.rs:1099: updating apt sources

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:1 https://dl.google.com/linux/chrome/deb stable InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:2 http://apt.pop-os.org/proprietary jammy InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:3 http://apt.pop-os.org/release jammy InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:4 http://apt.pop-os.org/ubuntu jammy InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:5 http://apt.pop-os.org/ubuntu jammy-security InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:6 http://apt.pop-os.org/ubuntu jammy-updates InRelease

Dec 25 12:03:52 pop-os pop-upgrade[3856]: Hit:7 http://apt.pop-os.org/ubuntu jammy-backports InRelease

Dec 25 12:03:53 pop-os pop-upgrade[3856]: Reading package lists...

Dec 25 12:03:53 pop-os pop-upgrade[3762]: [INFO ] daemon/mod.rs:1010: performing a release check

Dec 25 12:03:53 pop-os pop-upgrade[3762]: [INFO ] daemon/mod.rs:1017: Release { current: "22.04", lts: "true", next: "22.10", available: false }

Dec 25 12:03:53 pop-os pop-upgrade[3762]: [INFO ] release_api.rs:58: checking for build 22.04 in channel nvidia

Dec 25 12:03:54 pop-os systemd[1]: pop-upgrade.service: Deactivated successfully.

Dec 25 12:03:54 pop-os systemd[1]: pop-upgrade.service: Consumed 1.066s CPU time.

Dec 25 12:04:03 pop-os systemd[1225]: pop-upgrade-notify.service: Control process exited, code=killed, status=15/TERM

Dec 25 12:04:03 pop-os systemd[1225]: pop-upgrade-notify.service: Failed with result 'signal'.

Dec 25 12:04:48 pop-os pop-upgrade[5183]: checking if pop-upgrade requires an update

Dec 25 12:04:48 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:389: initializing daemon

Dec 25 12:04:48 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:749: daemon registered -- listening for new events

Dec 25 12:04:48 pop-os pop-upgrade[5218]: pop-upgrade was already not on hold.

Dec 25 12:04:48 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:1099: updating apt sources

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:1 https://dl.google.com/linux/chrome/deb stable InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:2 http://apt.pop-os.org/proprietary jammy InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:3 http://apt.pop-os.org/release jammy InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:4 http://apt.pop-os.org/ubuntu jammy InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:5 http://apt.pop-os.org/ubuntu jammy-security InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:6 http://apt.pop-os.org/ubuntu jammy-updates InRelease

Dec 25 12:04:49 pop-os pop-upgrade[5221]: Hit:7 http://apt.pop-os.org/ubuntu jammy-backports InRelease

Dec 25 12:04:50 pop-os pop-upgrade[5221]: Reading package lists...

Dec 25 12:04:50 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:1010: performing a release check

Dec 25 12:04:50 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:1017: Release { current: "22.04", lts: "true", next: "22.10", available: false }

Dec 25 12:04:50 pop-os pop-upgrade[5200]: [INFO ] release_api.rs:58: checking for build 22.04 in channel nvidia

Dec 25 12:04:50 pop-os systemd[1]: pop-upgrade.service: Deactivated successfully.

Dec 25 12:04:50 pop-os systemd[1]: pop-upgrade.service: Consumed 1.000s CPU time.
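The line worth reading in that output is the release check: it reports the current release as 22.04, the next as 22.10, and `available: false`. If you want to pull that out of a longer journal dump, here is a small Python sketch. The regular expression is based only on the format of the lines shown above, so treat it as an assumption that may not hold for other versions of `pop-upgrade`.

```python
import re

# Matches the "Release { ... }" payload exactly as it appears in the log above.
RELEASE_RE = re.compile(
    r'Release \{ current: "(?P<current>[^"]+)", lts: "(?P<lts>[^"]+)", '
    r'next: "(?P<next>[^"]+)", available: (?P<available>true|false) \}'
)


def parse_release(line):
    """Return the release-check fields from one log line, or None if absent."""
    match = RELEASE_RE.search(line)
    if match is None:
        return None
    return {
        "current": match["current"],
        "lts": match["lts"] == "true",
        "next": match["next"],
        "available": match["available"] == "true",
    }


# One line copied from the journal output above.
LOG_LINE = (
    'Dec 25 12:04:50 pop-os pop-upgrade[5200]: [INFO ] daemon/mod.rs:1017: '
    'Release { current: "22.04", lts: "true", next: "22.10", available: false }'
)

if __name__ == "__main__":
    print(parse_release(LOG_LINE))
```

You could feed it lines piped from something like `journalctl -u pop-upgrade` and keep only the results that are not `None`.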